We’ve made it to the end of the first term and are starting into the second term at Walden School. In our astrobiology class, the students have studied in detail the formation and evolution of the Moon according to the best evidence, as well as the history of lunar exploration and the Apollo program.

The students have drawn up storyboards of the animation we’re developing for the Center for Lunar Origin and Evolution. One of these storyboard frames is shown below. We will now pass these over to my 3D modeling class, who will soon start the process of planning and developing the models and scenes necessary to make the animations work. The multimedia students will then do the final assembly and special effects/post production work.

In the meantime, I have been working on ways to get the Moon and Mars 3D elevation data to work in my favorite 3D modeling program (Daz 3D Bryce). If I can get the data into a grayscale image, then I can turn it into a 3D terrain in Bryce. I’ve discovered that the LOLA (Lunar Orbiter Laser Altimeter) data from the Lunar Reconnaissance Orbiter and the MOLA (Mars Orbiter Laser Altimeter) data from Mars Global Surveyor can be imported directly into Adobe Photoshop using the Photoshop Raw format (as long as I know the exact size of the .img file). But I’ve encountered a problem: Photoshop has trouble with the positive and negative altitude data, as there isn’t any such thing as a negative color. So the high areas are showing up as dark colors and the low areas as light colors, with the lunar or Martian mean elevation (like sea level on Earth) marking the breaking point between them.
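Here is a small sketch of what I suspect is happening. It assumes (and this is my assumption, not something Photoshop reports) that the .img files store each elevation as a signed 16-bit integer and that Photoshop Raw reads the same bytes as unsigned values. The function name `as_unsigned_16` is just an illustrative helper.

```python
import struct

def as_unsigned_16(elevation):
    """Reinterpret the bytes of a signed 16-bit value as an unsigned one.

    Assumption: this mirrors how an image program would read signed
    elevation samples if it only understands unsigned 16-bit pixels.
    """
    return struct.unpack("<H", struct.pack("<h", elevation))[0]

# Elevations just below and just above the mean elevation (0).
for elevation in (-2000, -1, 0, 1, 2000):
    print(elevation, "->", as_unsigned_16(elevation))
```

Under that assumption, anything below the mean elevation wraps around to the bright end of the range while anything above it stays dark, which would explain the sharp break at the mean elevation.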

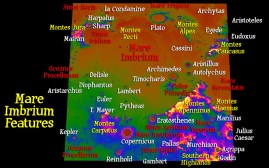

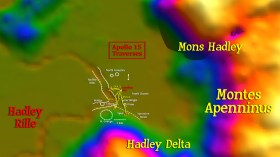

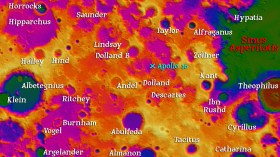

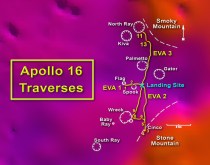

I’ve tried using the Exposure setting in Photoshop, with some success, but it always creates a border between the two areas that requires blurring, with a loss of detail no matter how careful I am. If anyone out there knows of a solution using Photoshop, such as how to automatically add a certain number to each color value in a selected area, then I’d appreciate your letting me know! I’m having one of my students, who is also in the 3D class and good at computer programming, develop a Python script that can do this for us. I don’t want to use the automatic software on the data website, because it digests the data too much and won’t allow us to create our own textures and animations. Regardless, I have managed to do test animations in Bryce zooming in on the six Apollo landing sites, along with text showing the geographical surroundings. I’m including some images here. My astrobiology class will create 3D images for the Mars sections tomorrow, and my 3D class will create animations flying around the Moon in the next week. I’ll be able to show these to the CLOE people as a progress report.
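For anyone curious, the "add a certain number to each value" idea could look something like the sketch below. This is not my student's script. It assumes the samples are signed 16-bit integers, that the byte order is known (the `big_endian` flag is something you would set from the data set's label file), and that the whole file fits in memory. The names `shift_to_unsigned` and `convert_file` are hypothetical.

```python
import struct

OFFSET = 32768  # moves the range -32768..32767 up to 0..65535

def shift_to_unsigned(raw_bytes, big_endian=False):
    """Add OFFSET to every signed 16-bit sample and return unsigned bytes.

    Assumption: raw_bytes holds signed 16-bit elevation samples only,
    with no header. The output keeps the same byte order as the input.
    """
    order = ">" if big_endian else "<"
    count = len(raw_bytes) // 2
    signed = struct.unpack(f"{order}{count}h", raw_bytes[: count * 2])
    return struct.pack(f"{order}{count}H", *(v + OFFSET for v in signed))

def convert_file(src_path, dst_path, big_endian=False):
    """Read a raw elevation file and write the shifted copy."""
    with open(src_path, "rb") as src:
        data = src.read()
    with open(dst_path, "wb") as dst:
        dst.write(shift_to_unsigned(data, big_endian))
```

After the shift, the lowest elevations become the darkest values and the highest become the lightest, with no break at the mean elevation. The converted file has the same width, height, and byte order as the original, so the same Photoshop Raw import settings should still apply.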

Now we’re beginning to study Mars and its potential as a source of life. We’re working through the Mars lesson plans I developed earlier this year for the Mars Education Challenge sponsored by Explore Mars and the National Science Teachers Association. On Monday, October 24th, I had the opportunity to share my lesson plans with other teachers through an online webinar hosted by Chris Carberry and Artemis Westenberg of Explore Mars. Howard Lineberger, the first place winner, shared his lessons this last Wednesday, and Andrew Hilt, the second place winner, shared his in September. The whole Mars Education Challenge has been a wonderful opportunity, not only to go to the NSTA conference in San Francisco this last March, but also to be a part of a larger community of educators interested in teaching Mars exploration in the classroom. I’m also not done with the opportunities this program has provided; I’ve been invited to the launch of the Mars Science Laboratory, but I don’t have the funds to go (and I have a large video project to finish). This coming March, we will have the chance to spend several days in the Mojave Desert with Chris McKay doing field research. Chris has confirmed the dates, and I look forward to the experience, even if it is somewhere out beyond Zzyzx Road at the end of the Earth.

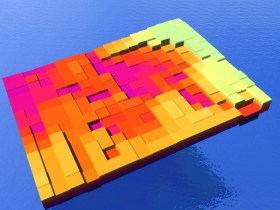

As part of the Mars lessons, my students have used a graduated lollipop stick to measure the height of locations in a hidden terrain box (modeling clay in a pencil box with holes drilled in the lid in a grid pattern). The measurements were written down and typed into a word processing program, separated by commas. This data was saved as a .txt file and imported into ImageJ, a program developed by the National Institutes of Health to analyze biological images. ImageJ can turn the numbers directly into a grayscale image. One group used the numbers to cut drinking straws to the right length and embed them in a layer of modeling clay to make a physical model of the terrain. They did quite well. The grayscale image was imported into Daz 3D Bryce and turned into a virtual model, as seen here. Now we move on to actual Mars data instead of simulated data only.
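The numbers-to-grayscale step can also be sketched in a few lines of Python, for anyone who wants to see what is going on under the hood. This is not how ImageJ does it internally; it is only an illustration that assumes one row of comma-separated measurements per line, and it writes a plain-text PGM image, a simple grayscale format that many image programs can open. The function name `heights_to_pgm` and the sample numbers are made up.

```python
def heights_to_pgm(text, max_gray=255):
    """Turn comma-separated rows of measurements into a plain-text PGM image.

    Assumption: every non-blank line is one row of the grid and all rows
    have the same number of values. The smallest value maps to black (0)
    and the largest to white (max_gray).
    """
    rows = [
        [float(cell) for cell in line.split(",") if cell.strip()]
        for line in text.splitlines()
        if line.strip()
    ]
    width = len(rows[0])
    if any(len(row) != width for row in rows):
        raise ValueError("all rows must have the same number of values")

    lowest = min(min(row) for row in rows)
    highest = max(max(row) for row in rows)
    span = (highest - lowest) or 1.0  # avoid dividing by zero on flat terrain

    lines = ["P2", f"{width} {len(rows)}", str(max_gray)]
    for row in rows:
        grays = (round((v - lowest) / span * max_gray) for v in row)
        lines.append(" ".join(str(g) for g in grays))
    return "\n".join(lines) + "\n"

# Made-up measurements for a tiny 3 x 2 grid.
sample = "0,5,10\n10,5,0\n"
print(heights_to_pgm(sample))
```

Saving the returned text to a file ending in .pgm gives a small grayscale image where brighter pixels correspond to larger measurements.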
